It’s November 2020, only days before the presidential election. Early voting is underway in several states as a video suddenly spreads across social media. One of the candidates has disclosed a dire cancer diagnosis and is making an urgent plea: “I’m too sick to lead. Please, don’t vote for me.” The video is quickly revealed to be a computer-generated hoax, but the damage is done, especially as trolls eagerly push the line that the video is real and the candidate has simply changed her mind.

Such a scenario, while seemingly absurd, would actually be possible to achieve using a “deepfake,” a doctored video in which a person can be made to appear as if they’re doing and saying anything. Experts are issuing increasingly urgent warnings about the advance of deepfake technology: both the realistic nature of these videos and the ease with which even amateurs can create them. The possibilities could bend the truth in terrifying ways. Public figures could be shown committing scandalous acts. Random women could be inserted into porn videos. Newscasters could announce the start of a nonexistent nuclear war. Deepfake technology threatens to provoke a real civic crisis, as people lose faith that anything they see is real.

House lawmakers will convene on Thursday for the first time to discuss the weaponization of deepfakes, and world leaders have begun to take notice.

“People can duplicate me speaking and saying anything. And it sounds like me and it looks like I’m saying it. And it’s a complete fabrication,” former President Barack Obama said at a recent forum. “The marketplace of ideas that is the basis of our democratic practice has difficulty working if we don’t have some common baseline of what’s true and what’s not.” He was featured in a viral video about deepfakes that portrays him calling his successor a “total and complete dipshit.”

How Deepfakes Are Made

Directors have long used video and audio manipulation to trick viewers into watching scenes with people who didn’t actually participate in filming. Peter Cushing, the English actor who played “Star Wars” villain Grand Moff Tarkin before his death in 1994, reappeared posthumously in the 2016 epic “Rogue One: A Star Wars Story.” “The Fast and the Furious” star Paul Walker, who died before the series’ seventh movie was complete, still appeared throughout the film through deepfake-style spoofing. And showrunners for “The Sopranos” had to create scenes with Nancy Marchand to close her storyline as Tony’s scornful mother, after Marchand died between the second and third seasons of the show.

Thanks to major strides in the artificial intelligence software behind deepfakes, this kind of technology is more accessible than ever.

Here’s how it works: Machine-learning algorithms are trained on a dataset of videos and images of a specific individual to generate a virtual model of their face that can be manipulated and superimposed. One person’s face can be swapped onto another person’s head, like this video of Steve Buscemi with Jennifer Lawrence’s body, or a person’s face can be toyed with on their own head, like this video of President Donald Trump disputing the veracity of climate change, or this one of Facebook CEO Mark Zuckerberg saying he “controls the future.” People’s voices can also be imitated with advanced technology. Using just a few minutes of audio, firms such as Cambridge-based Modulate.ai can create “voice skins” for individuals that can then be manipulated to say anything.

It may sound complicated, but it’s quickly getting easier. Researchers at Samsung’s AI Center in Moscow have already found a way to generate believable deepfakes with a relatively small dataset of subject imagery, “potentially even a single image,” according to their recent report. Even the “Mona Lisa” can be manipulated to look like she’s come to life.

There are also free apps online that allow ordinary people with limited video-editing experience to create simple deepfakes. As such tools continue to improve, amateur deepfakes are becoming more and more convincing, noted Britt Paris, a media manipulation researcher at Data & Society Research Institute.

“Before the advent of these free software applications that allow anyone with a little bit of machine-learning experience to do it, it was pretty much exclusively entertainment industry professionals and computer scientists who could do it,” she said. “Now, as these applications are free and available to the public, they’ve taken on a life of their own.”

The ease and speed with which deepfakes can now be created is alarming, said Edward Delp, the director of the Video and Imaging Processing Laboratory at Purdue University. He’s one of several media forensics researchers who are working to develop algorithms capable of detecting deepfakes as part of a government-led effort to defend against a new wave of disinformation.

“It’s scary,” Delp said. “It’s going to be an arms race.”

Nicolas Ortega for HuffPost

The Countless Dangers Of Deepfakes

Much of the discussion about the havoc deepfakes could wreak remains hypothetical at this stage, except when it comes to porn.

Videos labeled as “deepfakes” started in porn. The term was coined in 2017 by a Reddit user who posted fake pornographic videos, including one in which actor Gal Gadot was portrayed as having sex with a relative. Gadot’s face was digitally superimposed onto a porn actor’s body, and apart from a bit of glitching, the video was virtually seamless.

“Trying to protect yourself from the internet and its depravity is basically a lost cause,” actor Scarlett Johansson, who’s also been featured in deepfake porn videos, including some with millions of views, told The Washington Post last year. “Nothing can stop someone from cutting and pasting my image.”

It’s not just celebrities being targeted. Any person with public photos or videos clearly showing their face can now be inserted into crude videos with relative ease. As a result, revenge porn, or nonconsensual porn, is also becoming a growing threat. Spurned creeps don’t need sex tapes or nudes to post online anymore. They just need pictures or videos of their ex’s face and a well-lit porn video. There are even photo search engines (which HuffPost won’t name) that allow a person to upload an image of an individual and find a porn star with similar features for optimal deepfake results.

In online deepfake forums, men regularly make anonymous requests for porn that’s been doctored to feature women they know personally. The Post tracked down one woman whose requester had uploaded nearly 500 photos of her face to one such forum and said he was “willing to pay for good work.” There’s often no legal recourse for those who are victimized by deepfake porn.

Beyond the concerns about privacy and sexual humiliation, experts predict that deepfakes could pose serious threats to democracy and national security, too.

American adversaries and competitors “probably will attempt to use deep fakes or similar machine-learning technologies to create convincing, but false, image, audio, and video files to augment influence campaigns directed against the United States and our allies and partners,” according to the 2019 Worldwide Threat Assessment, an annual report from the director of national intelligence.

Deepfakes could be deployed to erode trust in public officials and institutions, exacerbate social tensions and manipulate elections, legal experts Bobby Chesney and Danielle Citron warned in a lengthy report last year. They suggested videos could falsely show soldiers slaughtering innocent civilians; white police officers shooting unarmed black people; Muslims celebrating ISIS; and politicians accepting bribes, making racist remarks, having extramarital affairs, meeting with spies or doing other scandalous things on the eve of an election.

“If you can synthesize speech and video of a politician, your mother, your child, a military commander, I don’t think it takes a stretch of the imagination to see how that could be dangerous for purposes of fraud, national security, democratic elections or sowing civil unrest,” said digital forensics expert Hany Farid, a senior adviser at the Counter Extremism Project.

The emergence of deepfakes brings not only the possibility of hoax videos spreading harmful misinformation, Farid added, but also of real videos being dismissed as fake. It’s a concept Chesney and Citron described as a “liar’s dividend.” Deepfakes “make it easier for liars to avoid accountability for things that are in fact true,” they explained. If a certain alleged pee tape were to be released, for instance, what would stop the president from crying “deepfake”?

Alarm Inside The Federal Government

Though Thursday’s congressional hearing will be the first to focus specifically on deepfakes, the technology has been on the government’s radar for a while.

The Defense Advanced Research Projects Agency, or DARPA, an agency of the U.S. Department of Defense, has spent tens of millions of dollars in recent years to develop technology that can identify manipulated videos and images, including deepfakes.

Media forensics researchers across the U.S. and Europe, including Delp from Purdue University, have received funding from DARPA to develop machine-learning algorithms that analyze videos frame by frame to detect subtle distortions and inconsistencies, to find out if the videos have been tampered with.


Much of the challenge lies in keeping pace with deepfake software as it adapts to new forensic methods. At one point, deepfakes couldn’t incorporate eye-blinking or microblushing (facial blushing that’s undetectable to the naked eye), making it easy for algorithms to identify them as fake, but that’s no longer the case.

“Our method learns all of these new attack approaches so we can then detect those,” Delp said.

“As the people making these videos get more and more sophisticated with their tools, we’re going to have to get more and more sophisticated with ours,” he added. “We could get to a situation in the future where you won’t want to believe an image or a video unless there’s some authentication mechanism.”

With a presidential election on the horizon, politicians have also started to sound the alarm about deepfakes. Congress introduced the Malicious Deep Fake Prohibition Act in December, which would make it illegal to distribute deepfakes with an intent to “facilitate criminal or tortious conduct,” and the Algorithmic Accountability Act in April, which would require tech companies to audit their algorithms for bias, accuracy and fairness.

“Now we have deepfake technology, and the potential for disruption is exponentially greater,” Rep. Adam Schiff (D-Calif.) said last month at a panel event in Los Angeles. “Now, in the weeks leading up to an election, you could have a foreign power or domestic party introduce into the social media bloodstream a completely fraudulent audio or video almost indistinguishable from real.”

A New Breed Of ‘Fake News’

Despite Trump’s many tirades against fake news, his own administration has shared hoax videos online. The president himself has circulated footage that was manipulated to deceive the public and stoke partisan tensions.

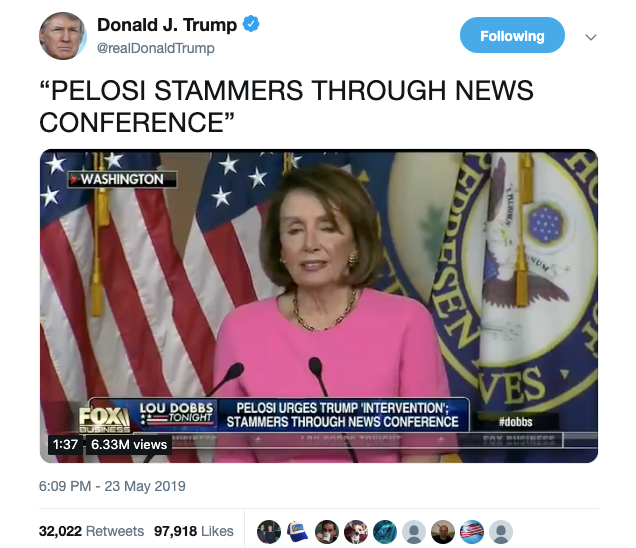

In May, Trump tweeted a montage of clips featuring Nancy Pelosi, the Democratic speaker of the House of Representatives, that was selectively edited to highlight her verbal stumbles.

“PELOSI STAMMERS THROUGH NEWS CONFERENCE,” Trump wrote in his tweet, which he has yet to delete. His attorney Rudy Giuliani also tweeted a link to a similar video, with the text: “What is wrong with Nancy Pelosi? Her speech pattern is bizarre.” That video, as it turns out, had been carefully doctored to slow Pelosi’s speech, giving the impression that she was intoxicated or ill.

Months earlier, White House press secretary Sarah Huckabee Sanders tweeted a video that had been altered in an attempt to dramatize an interaction between CNN reporter Jim Acosta and a female White House intern.

The video, which Sanders reportedly reposted from notorious conspiracy theorist Paul Joseph Watson, was strategically sped up at certain points to make it look as if Acosta had aggressively touched the intern’s arm while she tried to take a microphone away from him.

“We will not tolerate the inappropriate behavior clearly documented in this video,” Sanders wrote in her tweet, which she, too, has yet to delete.

Neither the video of Pelosi nor the one of Acosta and the intern was a deepfake, but both demonstrated the power of manipulated videos to go viral and sway public opinion, said Paris, from Data & Society Research Institute.

“We’re in an era of misinformation and fake news,” she said. “People will believe what they want to believe.”

When Hoaxes Go Viral

In recent years, tech giants have struggled, and sometimes refused, to curb the spread of fake news on their platforms.

The doctored Pelosi video is a good example. Soon after it was shared online, it went viral across multiple platforms, garnering millions of views and stirring rumors about Pelosi’s fitness as a political leader. In the immediate aftermath, Google-owned YouTube said it would remove the video, but days later, copies were still circulating on the site, CNN reported. Twitter declined to remove or even comment on the video.

Facebook also declined to remove the video, even after its third-party fact-checkers determined that the video had indeed been doctored, then doubled down on that decision.

“We think it’s important for people to make their own informed choice about what to believe,” Facebook executive Monika Bickert told CNN’s Anderson Cooper. When Cooper asked if Facebook would take down a video that was edited to slur Trump’s words, Bickert repeatedly declined to give a straight answer.

“We aren’t in the news business,” she said. “We’re in the social media business.”

More and more people are turning to social media as their main source for news, however, and Facebook profits off the sharing of news, both real and fake, on its site.


Efforts to contain deepfakes in particular have also had varying levels of success. Last year, Pornhub joined other sites including Reddit and Twitter in explicitly banning deepfake porn, but has so far failed miserably to enforce that policy.

Tech companies “have been dragging their feet for way too long,” said Farid, who believes the platforms need to be held accountable for their role in amplifying disinformation.

In an ecosystem where hoaxes are so often designed to go viral, and many people seem inclined to believe whatever story best aligns with their own views, deepfakes are poised to bring the threat of fake news to a new level, added Farid. He fears that the tools being developed to debunk deepfakes won’t be enough to reverse the damage that’s caused when such videos are shared all over the web.

“Even if the tape is corrected, you can’t put the genie back in the bottle.”


go to my blog telegram engagement automation

cum retrag de pe mostbet pe mastercard https://mostbet40596.help

mostbet výběr bankovním převodem mostbet výběr bankovním převodem

look at this now telegram advanced search software

сайт 1win отзывы сайт 1win отзывы

click to investigate Telegram Expert license

see Telegram Expert program

see this site telegram invite automation

his response fortnite tracker tool

you can try these out fortnite inventory worth checker

Homepage telegram automation for agencies

pop over to these guys telegram automation system

1вин россия http://www.1winkg.in.net

Web Site telegram chat autoposter

Website telegram channel scraper

click here for info telegram automation system

my company fortnite tracker free

Going Here telegram invite tool

Full Report fortnite season stats

learn this here now [url=https://en.telegramexpert.pro]telegram marketing software[/url]

next fortnite stats tracker free

click Telegram Expert program

1win login without app 1win login without app

go now fortnite locker

Check Out Your URL telegram neurocommenting

Full Article tool for telegram promotion

i was reading this telegram lead outreach tool

Статья https://thebachelor.ru/mufty-termousazhivaemye-dlya-trub-naznachenie-vybor-i-primenenie/

1win USDT çıxarış 1win64218.help

internet fortnite match tracker

I’ve been active for a year, mostly for exploring governance, and it’s always seamless withdrawals.

Learn More fortnite tracker free

1win deposit problem https://1win5527.ru

my website telegram group scraper

my site Telegram Expert buy

mines мостбет http://mostbet76480.help

слотҳои 1вин слотҳои 1вин

1win balance kg вывод 1win86307.help

index telegram channel growth software

have a peek here Telegram Expert documentation

canadian pharmacy viagra reviews metoprolol succinate online pharmacy cheapest pharmacy to get prescriptions filled

Bonuses fortnite competitive stats

blog here telegram bulk actions software

right here fortnite player tracker

click to read fortnite inventory value checker

1win baseball betting https://www.1win5527.ru

I value the clear transparency and responsive team. This site is reliable.

mines игра mostbet mostbet76480.help

1win статистика https://1win14675.help

1вин о деньги пополнение 1вин о деньги пополнение

check out this site telegram automation tool

why not try these out how to automate telegram marketing

use this link telegram invite automation

this content telegram growth software for business

see this website fortnite locker estimate

special info telegram message sender

1win login problem https://www.1win5527.ru

percocet online no prescription pharmacy express scripts com pharmacies generic zoloft online pharmacy no prescription

have a peek at this website fortnite locker cosmetics

blog link fortnite stat tracker

see this page fortnite locker skins

visit homepage telegram chat scraper

see this website fortnite locker

mostbet игра mines mostbet игра mines

1win дархости хуруҷ http://www.1win14675.help

1вин официальный сайт регистрация https://1win86307.help/

web link fortnite locker inventory checker

Visit This Link telegram automation tools list

Discover More Here fortnite season stats

next telegram comment bot

go now fortnite locker cosmetics

как скачать мостбет apk как скачать мостбет apk

1win бозиҳои демо 1win бозиҳои демо

1win ссылка на скачивание http://1win86307.help

view publisher site fortnite inventory checker online

go to the website telegram auto send messages to chats

visit their website fortnite stats online

go fortnite competitive stats

melbet лайв http://melbet74825.help/

mostbet bonus uz mostbet bonus uz

мостбет фора мостбет фора

more info here Telegram Expert

her latest blog fortnite stats online

click here to read Telegram Expert program

check out here telegram marketing tool comparison

directory fortnite inventory checker

melbet lucky jet как играть https://melbet74825.help

mostbet telefon raqami mostbet telefon raqami

find telegram message sender

mostbet бонус без депозита правда [url=https://mostbet73481.help/]mostbet бонус без депозита правда[/url]

1win возврат ставки https://www.1win86307.help

mostbet криптовалюта пополнение http://mostbet76480.help

1win натиҷаҳо 1win14675.help

click reference Telegram Expert features

click telegram automation global tool

1win легально ли в Киргизии http://www.1win86307.help

1win apk Тоҷикистон 1win14675.help

mostbet скачать для Казахстана https://www.mostbet76480.help

click now https://cleanthebit.com/fr/

go right here https://cleanthebit.com/fr/

visite site https://cleanthebit.com/

internet https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

Bonuses fortnite tracker free

that site fortnite tracker website

useful reference https://cleanthebit.com/ru/

see post fortnite account tracker

imp source https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

Related Site telegram group invite tool

next fortnite locker cosmetics

майнс melbet https://melbet74825.help

more helpful hints fortnite locker value checker

mostbet ilovasini yuklab olish mostbet ilovasini yuklab olish

read review Telegram Expert program

see here now https://cleanthebit.com/hi/

additional resources https://cleanthebit.com/ru/

here analyze fortnite inventory

best online pharmacy generic viagra simvastatin people pharmacy online pharmacy store hyderabad

have a peek at this website https://cleanthebit.com/fr/

go to this website check fortnite stats

websites telegram autoposting

useful site fortnite player stats

click for more fortnite tracker free

click here to find out more fortnite tracker

click here to read fortnite tracker online

Recommended Site https://cleanthebit.com/hi/

melbet регистрация http://melbet74825.help/

dutasteride from dr reddy’s or inhouse pharmacy naturxheal family pharmacy & health store-doral online pharmacy percocet no prescription

Recommended Reading fortnite tracker online

mostbet shikoyat mostbet shikoyat

mostbet букмекер mostbet73481.help

1win mastercard deposit https://1win5743.help

мостбет вход через зеркало http://www.mostbet71852.help

you can try here https://cleanthebit.com/ru/

this link https://cleanthebit.com/hi/

look at this now https://quarklab.ru/

check out here fortnite ranked stats

navigate to these guys fortnite competitive stats

click this link now analyze fortnite inventory

Discover More https://cleanthebit.com/hi/

m fortune https://m-fortune.info/

дог хаус играть онлайн дог хаус на the-dog-house-cloude.ru

1win plinko withdrawal 1win plinko withdrawal

mostbet как пройти kyc http://mostbet71852.help

melbet ошибка вывода https://melbet74825.help/

mostbet refund https://mostbet91372.help

This Site https://cleanthebit.com/fr/

site https://cleanthebit.com/

mines игра mostbet https://mostbet73481.help

look at this web-site https://cleanthebit.com/fr/

melbet как играть в crash melbet74825.help

mostbet qiwi depozit mostbet qiwi depozit

read more https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

navigate to this web-site https://quarklab.ru/

мостбет способы оплаты https://www.mostbet73481.help

pop over to this web-site https://quarklab.ru/fr/crypto-drainer/

Wood frame – Segunda residencia, Casas de madera

mostbet вход сегодня https://mostbet71852.help/

1win app for infinix http://www.1win5743.help

Diseño moderno – Carcasa de madera, Inversión inteligente

мостбет проверка документов https://mostbet73481.help/

use this link https://quarklab.ru/fr/crypto-drainer/

Construcción de casas – Montaje rapido y eficiente, Montaje rapido y eficiente

Inversión inteligente – Montaje rapido y eficiente, Alta calidad

Construcción industrializada – Construcción industrializada, Llave en mano

internet https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

mostbet официальный сайт https://mostbet71852.help/

1win support Uganda live chat 1win support Uganda live chat

Carcasa de madera – Bajo consumo energético, Inversión inteligente

find this https://cleanthebit.com/hi/

1win банковская карта вывод https://1win56183.help

1win lucky jet https://1win72951.help

1win contact email 1win contact email

1вин майнс http://1win56183.help

look at this now https://cleanthebit.com/zh/

lucky jet 1win [url=www.1win72951.help]www.1win72951.help[/url]

1win apk android 1win60823.help

check this https://cleanthebit.com/ru/

1win betting account Uganda http://1win5743.help/

как использовать бонус mostbet http://mostbet71852.help

Construcción de casas en Cataluña – Construcción de casas en Cataluña, Materiales naturales

Comprehensive overview of current guidelines for heart failure with reduced ejection fraction. Quadruple therapy has transformed prognosis. – https://archivo.infojardin.com/tema/palomina-tengo-kilos-y-kilos-de-defecaciones-de-paloma-sirve-como-abono.316645/ , The explanation of HER2-targeted therapies in breast cancer is clear and comprehensive. .

slots 1win http://1win5743.help/

mostbet ios приложение Кыргызстан http://www.mostbet71852.help

us pharmacy no prior prescription low dose naltrexone skip pharmacy how much does viagra cost at pharmacy

Montaje rapido y eficiente – Llave en mano, Alta calidad

смотреть здесь https://hpc.name/thread/e930/130074/opredelenie-shemy-i-sootnosheniy-po-vektornoy-diagramme.html

Recommended Reading https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

see it here https://cleanthebit.com/es/

узнать Кашпо из ротанга в Краснодаре и краем

1вин Киргизия 1вин Киргизия

можно проверить ЗДЕСЬ https://hpc.name/thread/c251/70511/komponenty-dlya-raboty-s-derevyami-v-c-builder.html

canadian pharmacy discount coupon canadian pharmacy viagra 100mg online pharmacy same day delivery

1вин ios http://1win72951.help/

continue reading this https://tornadocash.app

cum introduc cod bonus 1win http://1win60823.help/

Tripscan — это современная платформа, которая помогает компаниям эффективно управлять своими маршрутами и логистикой. Наш сервис предоставляет удобный интерфейс для планирования и отслеживания поездок, что значительно сокращает время и повышает точность выполнения задач.

[url=https://trip67c.cc ]трип скан

[/url]

Трип скан — это надежное решение, которое интегрируется с различными системами учета и автоматизации. Благодаря Trip scan вы сможете быстро анализировать маршруты, оптимизировать расходы и контролировать выполнение заказов в реальном времени.

[url=https://trip67c.cc ]трипскан вход

[/url]

Tripscan top — это наша эксклюзивная функция, которая позволяет пользователям получать наиболее актуальные данные о движении транспорта и эффективности маршрутов. Используйте трипскан вход для авторизации и доступа к расширенным возможностям платформы.

[url=https://trip67c.cc ]trip scan

[/url]

Для новых пользователей у нас есть удобный трипскан сайт, где можно ознакомиться с функционалом, зарегистрироваться и начать использовать сервис уже сегодня. Трипскан сайт обеспечивает простоту и безопасность работы с данными, а также поддержку на всех этапах.

[url=https://trip67c.cc ]tripscan

[/url]

Выбирайте Tripscan — ваш надежный партнер в сфере логистики и транспорта. Откройте новые горизонты с Tripscan top и убедитесь в эффективности нашего сервиса!

https://trip67c.cc

trip scanтрипскан входtripscan topтрип скантрипскан

1win мегапей пополнение 1win мегапей пополнение

Extra resources https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

find more info https://cleanthebit.com/ru/

смотреть здесь томск перетяжка мебели

1win официальный сайт скачать http://1win72951.help

1win apk download http://1win60823.help

1win как пополнить Optima Bank https://1win56183.help

mostbet depunere visa http://mostbet13829.help/

1win Кыргызстан 1win Кыргызстан

Bajo consumo energético – Materiales naturales, Wood frame

нажмите, чтобы подробнее https://hpc.name/thread/t970/96193/problemy-s-rabotoy-skriptov-v-react-prilojenii.html

helpful hints https://yomix.biz

Continued https://quarklab.ru/

Your Domain Name https://cleanthebit.com/hi/

1win Ош кирүү 1win Ош кирүү

mostbet update apk https://mostbet13829.help/

Carcasa de madera – Casas de madera, Construcción de casas en Cataluña

mines 1вин mines 1вин

1win bonus lunar http://1win60823.help

Carcasa de madera – Bajo consumo energético, Bajo consumo energético

подробнее https://hpc.name/thread/r212/103634/kak-ogranichit-dostup-k-polyam-obekta-v-actionscript-sozdanie-getterov-i-setterov.html

Casas de madera – Casas sostenibles, Casa eficiente

visit the website https://cleanthebit.com/es/

web https://swaplab.io/

image source https://cleanthebit.com/es/

1win вывод на карту сколько идет http://1win72951.help/

Inversión inteligente – Construcción de casas, Casas sostenibles

1win casino live Moldova https://1win60823.help

page https://cleanthebit.com/

Montaje rapido y eficiente – Montaje rapido y eficiente, Llave en mano

this link https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

click here for more info https://cleanthebit.com/fr/

1вин регистрация http://www.1win94317.help

mostbet descărcare ios https://mostbet13829.help

можно проверить ЗДЕСЬ Кашпо из искусственного ротанга

как сделать ставку в мостбет http://www.mostbet09654.help

Construcción de casas en Cataluña – Construcción industrializada, Casas sostenibles

look at this now https://daobase.online/

viagra online pharmacy no prescription online pharmacy no prior prescription online pharmacy discount code

1win cash out https://1win92486.help

1win ставки на теннис Кыргызстан https://1win56183.help

1win отзывы Киргизия http://1win94317.help/

mostbet login pe telefon mostbet login pe telefon

сюда Кашпо из искусственного ротанга

website here https://yomix.biz

mostbet регистрация через sms http://www.mostbet09654.help

visit this site https://yomix.biz

сайт https://hpc.name/thread/p761/100123/kak-peredat-dannye-metodom-post-na-drugoy-server-s-pomoshchyu-perl.html

плинко 1win 1win92486.help

go to this site https://swaplab.io

mostbet total tikish https://mostbet59371.help/

1win блэкджек 1win блэкджек

mostbet securitate plata http://mostbet13829.help/

перейти на сайт Кашпо из ротанга в Краснодаре и краем

этот контент https://hpc.name/thread/u490/103519/kak-vychislit-summu-elementov-spiska-esli-oni-obrazuyut-arifmeticheskuyu-progressiyu-v-ocaml.html

этот сайт https://hpc.name/thread/b702/140410/vnesenie-izmeneniy-vnutri-tablicy.html

mostbet kurs mostbet59371.help

Смотреть здесь https://hpc.name/thread/t390/102722/problemy-s-vypolneniem-lua-skriptov-v-igrovom-redaktore.html

1win букмекерская контора Бишкек https://1win94317.help

cum contactez chatul mostbet http://mostbet13829.help/

ссылка на сайт Кашпо из ротанга в Краснодаре и краем

мостбет служба поддержки http://mostbet09654.help

Llave en mano – Bajo consumo energético, Casa eficiente

click to investigate https://mixmy.money/

1вин промо код 1вин промо код

Продолжение https://hpc.name/thread/n521/125785/kak-pereklyuchat-raskladku-klaviatury-na-prestigio-multipad-pmp7280c3g-duo.html

visit this site right here https://cleanthebit.com/zh/

узнать больше Здесь https://soulestate.ru/catalog/novostroiki/

Продолжение Кашпо из ротанга

узнать больше Здесь томск перетяжка мебели

этот сайт Кашпо из ротанга

мостбет плинко играть мостбет плинко играть

mostbet mines strategiya https://www.mostbet59371.help

1win букмекер http://1win92486.help/

посетить веб-сайт https://soulestate.ru/catalog/vtorichnoe-jilio/grand-deluxe-na-plyuschikhe66146/

узнать больше Здесь томск перетяжка мебели

каталог https://soulestate.ru/catalog-object/

мостбет майнс http://www.mostbet09654.help

нажмите здесь https://soulestate.ru/catalog/vtorichnoe-jilio/polyanka-4466095/

Читать далее Кашпо из искусственного ротанга

mostbet bonus kod kiritish https://mostbet59371.help

mostbet вход на официальный сайт [url=http://mostbet09654.help/]http://mostbet09654.help/[/url]

learn the facts here now https://cleanthebit.com/ru/

1вин регистрация Киргизия https://1win92486.help

Подробнее здесь https://hpc.name/thread/f680/98199/kak-vybrat-cms-dlya-realizacii-platnogo-dostupa-k-polnym-versiyam-statey.html

посетить сайт Кашпо из искусственного ротанга

узнать томск перетяжка мебели

melbet элсом пополнение https://melbet45163.help/

mostbet aplikacja android http://mostbet2002.help

1win Ош https://www.1win92486.help

mostbet plinko statistika http://mostbet59371.help

i loved this https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

Подробнее https://hpc.name/thread/s621/115536/materinskaya-plata-vklyuchaetsya-na-5-sekund-i-vyklyuchaetsya.html

mostbet problem z pobraniem apk https://www.mostbet2002.help

read review https://cleanthebit.com/zh/

lucky jet мелбет http://melbet45163.help

mostbet yangi promo kod https://mostbet59371.help/

view it https://cleanthebit.com/fr/

Читать далее томск перетяжка мебели

содержание https://hpc.name/thread/x180/122203/gde-nayti-shemu-materinskoy-platy-dlya-toshiba-satellite-l350-6050a2170201-mb-a03-rev-2-01.html

узнать больше томск перетяжка мебели

melbet android app скачать melbet android app скачать

see here now https://cleanthebit.com/hi/

Продолжение https://hpc.name/thread/s282/142909/perevod-koda-iz-c-v-c.html

мелбет как играть в plinko https://melbet49375.help

he has a good point https://cleanthebit.com/ru/

melbet_kg https://melbet45163.help/

mostbet logowanie bukmacher https://www.mostbet2002.help

этот сайт https://hpc.name/thread/n120/144705/kak-dobavit-ssylku-ili-tegi-vokrug-vydelennogo-teksta-v-pole-textarea.html

visit the site https://cleanthebit.com/fr/

Подробнее https://soulestate.ru/commercia/catalog/rent_shoppingArea/

ссылка на сайт https://soulestate.ru/catalog/novostroiki/capital-towers66295/

подробнее здесь томск перетяжка мебели

mostbet bonus na esport https://mostbet2002.help

мелбет android app скачать https://melbet45163.help

игра плинко мелбет http://melbet49375.help

Click Here https://cleanthebit.com/es/

1win apk боргирӣ 1win apk боргирӣ

pin-up sign in http://pinup2010.help

1вин ставка https://www.1win26514.help

pin-up pul yatır pin-up pul yatır

mostbet bonus za rejestrację bez depozytu https://mostbet2002.help

мелбет скачать на телефон мелбет скачать на телефон

melbet логин https://www.melbet49375.help

mostbet kursy piłkarskie mostbet2002.help

index https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

visit this page https://cleanthebit.com/es/

find out https://cleanthebit.com/es/

melbet odengi пополнение https://www.melbet45163.help

visit https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

Hey there are using WordPress for your site platform? I’m new

to the blog world but I’m trying to get started and create

my own. Do you require any html coding knowledge to make

your own blog? Any help would be greatly appreciated!

view website https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

click over here now https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

Смотреть здесь https://hpc.name/thread/o560/142823/vychislenie-lineynogo-vyrajeniya.html

click now https://cleanthebit.com/fr/

helpful hints https://cleanthebit.com/fr/

website link https://quarklab.ru/es/drenador-de-cripto/

Learn More https://cleanthebit.com/zh/

official website https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

melbet вход без пароля http://melbet49375.help

1win cashout 1win cashout

можно проверить ЗДЕСЬ https://hpc.name/thread/x660/100215/ispolzovanie-modifikatora-u-v-regulyarnyh-vyrajeniyah-v-perl.html

pin-up bonus veyceri nə qədərdir pin-up bonus veyceri nə qədərdir

Discover More Here https://cleanthebit.com/es/

webpage https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

click this link here now https://cleanthebit.com/zh/

click here for more https://cleanthebit.com/es/

click here now https://cleanthebit.com/zh/

browse this site https://cleanthebit.com/es/

hop over to this website https://cleanthebit.com/zh/

мелбет как вывести на odengi http://melbet49375.help

Our site https://cleanthebit.com/fr/

read what he said https://cleanthebit.com/

check that https://cleanthebit.com/

navigate to this website https://cleanthebit.com/ru/

1win селфи тасдиқ http://1win26514.help

pin-up tək mərc https://www.pinup2010.help

visite site https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

Go Here https://cleanthebit.com/ru/

her comment is here https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

this link https://quarklab.ru/fr/crypto-drainer/

find out this here https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

browse around these guys https://quarklab.ru/es/drenador-de-cripto/

read https://quarklab.ru/

Innovative approach to smoking cessation using personalized nicotine replacement. Digital health tools can enhance compliance. – https://careconnectclinic.com/ , Balanced discussion on the benefits and limitations of wearable health technology. .

find more information https://quarklab.ru/es/drenador-de-cripto/

navigate to this website https://quarklab.ru/fr/crypto-drainer/

sites https://quarklab.ru/es/drenador-de-cripto/

Подробнее https://hpc.name/thread/e051/142919/malenkaya-stiralnaya-mashinka-dlya-dachi-sovety-po-vyboru.html

click over here https://quarklab.ru/fr/crypto-drainer/

hop over to this web-site https://cleanthebit.com/fr/

my explanation https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

mostbet ссылка на зеркало http://mostbet15384.help

как играть в mines на 1win https://www.1win59801.help

mostbet sign in account http://www.mostbet94827.help

перенаправляется сюда https://hpc.name/thread/j691/136996/kak-izmenyat-polojenie-kontrolov-na-stranice-v-visual-studio.html

view website https://cleanthebit.com/hi/

check over here https://cleanthebit.com/

look here https://cleanthebit.com/hi/

1win пул гузоштан http://1win26514.help/

pin-up poker oynamaq pinup2010.help

click here to investigate https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

visit the website https://cleanthebit.com/zh/

other https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

Going Here https://cleanthebit.com/zh/

mostbet apk не устанавливается mostbet apk не устанавливается

1вин Узбекистан официальный сайт https://1win59801.help

mostbet complaint https://mostbet94827.help/

Смотреть здесь https://hpc.name/thread/k060/77093/izmenenie-yazyka-interfeysa-i-ustanovka-apache-netbeans-inkubacionnyy-11-0-na-windows.html

this content https://cleanthebit.com/es/

my latest blog post https://quarklab.ru/

see this site https://quarklab.ru/

try this site https://cleanthebit.com/fr/

get redirected here https://cleanthebit.com/zh/

1win кэшбэк моҳона https://www.1win26514.help

pin-up slot qaydaları pin-up https://www.pinup2010.help

anchor https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

Перейти на сайт https://hpc.name/thread/j781/89189/algoritm-deleniya-s-ostatkom-v-micro.html

More Info https://cleanthebit.com/zh/

Homepage https://cleanthebit.com/fr/

great site https://cleanthebit.com/fr/

go to my site https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

Check Out Your URL https://quarklab.ru/es/drenador-de-cripto/

have a peek at these guys https://quarklab.ru/es/drenador-de-cripto/

check over here https://cleanthebit.com/ru/

промокод мостбет при регистрации http://mostbet15384.help

1win пополнить счет https://1win59801.help/

mostbet wagering mostbet wagering

her comment is here https://cleanthebit.com/es/

try these out https://quarklab.ru/fr/crypto-drainer/

informative post https://cleanthebit.com/zh/

1win букмекерская контора Бишкек 1win букмекерская контора Бишкек

weblink https://cleanthebit.com/

from this source https://cleanthebit.com/fr/

lucky jet игра mostbet lucky jet игра mostbet

mostbet mirror today mostbet mirror today

1вин платежные методы https://1win59801.help/

look at here https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

go to my blog https://cleanthebit.com/fr/

1вин лаки джет http://www.1win68503.help

learn the facts here now https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

мелбет депозит с карты мир https://melbet94130.help

my site https://quarklab.ru/es/drenador-de-cripto/

Full Report https://cleanthebit.com/ru/

her response https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

most bet sportsbook http://mostbet94827.help

mostbet бонусы казино mostbet бонусы казино

1win plinko играть 1win plinko играть

why not try these out https://quarklab.ru/

i thought about this https://quarklab.ru/

melbet бонус на первый депозит melbet бонус на первый депозит

continue reading this https://quarklab.ru/

a knockout post https://quarklab.ru/es/drenador-de-cripto/

мостбет бонус на первый депозит условия http://mostbet15384.help

1вин Uzum Bank пополнение 1вин Uzum Bank пополнение

mostbet verification mostbet verification

1win слоты на деньги 1win слоты на деньги

Visit Your URL https://cleanthebit.com/zh/

view publisher site https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

like it https://quarklab.ru/fr/crypto-drainer/

click resources https://quarklab.ru/es/drenador-de-cripto/

site link https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

1вин зеркало Бишкек https://1win68503.help

melbet russia http://melbet94130.help

navigate to this website https://quarklab.ru/

useful link https://cleanthebit.com/

see this here https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

over here https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

advice https://quarklab.ru/fr/crypto-drainer/

click to investigate https://quarklab.ru/fr/crypto-drainer/

blog https://cleanthebit.com/

click here for more https://quarklab.ru/fr/crypto-drainer/

1win одиночная ставка http://1win68503.help

his comment is here https://cleanthebit.com/zh/

Get More Information https://cleanthebit.com/

мелбет чаро барориш намешавад http://melbet39704.help/

aviator how to deposit airtel money http://www.aviator67093.help

vavada depozit https://vavada2008.help/

anchor https://cleanthebit.com/zh/

бездепозитный бонус melbet бездепозитный бонус melbet

browse around this web-site https://cleanthebit.com/es/

1win история транзакций http://www.1win68503.help

this link https://quarklab.ru/

aviator terms and conditions https://aviator67093.help/

vavada kladionica prijava https://vavada2008.help/

melbet lucky jet стратегия http://melbet39704.help

check it out https://cleanthebit.com/fr/

i thought about this https://quarklab.ru/zh/%e5%8a%a0%e5%af%86%e7%9b%97%e7%aa%83%e5%b7%a5%e5%85%b7/

нажмите https://hpc.name/thread/i712/127968/pochemu-telefon-otklyuchaetsya-pri-podklyuchenii-cherez-usb-koncentrator-i-kak-eto-ispravit.html

review https://quarklab.ru/ru/%d0%ba%d1%80%d0%b8%d0%bf%d1%82%d0%be-%d0%b4%d1%80%d0%b5%d0%b9%d0%bd%d0%b5%d1%80/

мелбет ставки на баскетбол http://melbet94130.help

A federal judge has ordered the release of 5-year-old Liam Conejo Ramos and his father from the South Texas Family Residential Center in Dilley, Texas, according to a ruling obtained by CNN.

Liam and his father, Adrian, were taken by immigration agents from his snowy suburban Minneapolis driveway and sent 1,300 miles to a Texas detention facility designed to detain families. They have been detained for more than a week.

The order specifies the preschooler and his father be released “as soon as practicable” and no later than Tuesday as their immigration case proceeds through the court system. The ruling, shared with CNN by the judge’s courtroom deputy, was first reported by the San Antonio Express-News.

“We are now working closely with our clients and their family to ensure a safe and timely reunion,” the family’s lawyers said in a Saturday statement. “We are pleased that the family will now be able to focus on being together and finding some peace after this traumatic ordeal.”

In a scathing opinion, which at times read more like a civics lesson, US District Judge Fred Biery admonished “the government’s ignorance of an American historical document called the Declaration of Independence” and quoted Thomas Jefferson’s grievances against “a would-be authoritarian king,” saying today people “are hearing echos of that history.”

Liam’s detention – and the striking photo of an agent clutching the boy’s Spider-Man backpack as he stared from under a cartoon bunny hat – fed mounting outrage over the Trump administration’s massive immigration crackdown in Minneapolis and renewed the question: What happens to children when their parents are abruptly taken by ICE?

In another diversion from the norms of judicial writing, the judge included the now famous image of Liam at the end of his opinion, under his signature, along with references to the Bible passages Matthew 19:14 and John 11:35.

Liam’s case, Biery wrote, originated in “the ill-conceived and incompetently-implemented government pursuit of daily deportation quotas, apparently even if it requires traumatizing children.”

“Observing human behavior confirms that for some among us, the perfidious lust for unbridled power and the imposition of cruelty in its quest know no bounds and are bereft of human decency,” wrote the judge. “And the rule of law be damned.”
